Posted by Ian Beer, Project Zero

NOTE: This specific issue was fixed before the launch of Privacy-Preserving Contact Tracing in iOS 13.5 in May 2020.

In this demo I remotely trigger an unauthenticated kernel memory corruption vulnerability which causes all iOS devices in radio-proximity to reboot, with no user interaction. Over the next 30'000 words I'll cover the entire process to go from this basic demo to successfully exploiting this vulnerability in order to run arbitrary code on any nearby iOS device and steal all the user data.

Introduction

Quoting @halvarflake's Offensivecon keynote from February 2020:

"Exploits are the closest thing to "magic spells" we experience in the real world: Construct the right incantation, gain remote control over device."

For 6 months of 2020, while locked down in the corner of my bedroom surrounded by my lovely, screaming children, I've been working on a magic spell of my own. No, sadly not an incantation to convince the kids to sleep in until 9am every morning, but instead a wormable radio-proximity exploit which allows me to gain complete control over any iPhone in my vicinity. View all the photos, read all the email, copy all the private messages and monitor everything which happens on there in real-time.

The takeaway from this project should not be: no one will spend six months of their life just to hack my phone, I'm fine.

Instead, it should be: one person, working alone in their bedroom, was able to build a capability which would allow them to seriously compromise iPhone users they'd come into close contact with.

Imagine the sense of power an attacker with such a capability must feel. As we all pour more and more of our souls into these devices, an attacker can gain a treasure trove of information on an unsuspecting target.

What's more, with directional antennas, higher transmission powers and sensitive receivers the range of such attacks can be considerable.

I have no evidence that these issues were exploited in the wild; I found them myself through manual reverse engineering. But we do know that exploit vendors seemed to take notice of these fixes. For example, take this tweet from Mark Dowd, the co-founder of Azimuth Security, an Australian "market-leading information security business":

This tweet from @mdowd on May 27th 2020 mentioned a double free in BSS reachable via AWDL

The vulnerability Mark is referencing here is one of the vulnerabilities I reported to Apple. You don't notice a fix like that without having a deep interest in this particular code.

This Vice article from 2018 gives a good overview of Azimuth and why they might be interested in such vulnerabilities. You might trust that Azimuth's judgement of their customers aligns with your personal and political beliefs, you might not, that's not the point. Unpatched vulnerabilities aren't like physical territory, occupied by only one side. Everyone can exploit an unpatched vulnerability and Mark Dowd wasn't the only person to start tweeting about vulnerabilities in AWDL.

This has been the longest solo exploitation project I've ever worked on, taking around half a year. But it's important to emphasize up front that the teams and companies supplying the global trade in cyberweapons like this one aren't typically just individuals working alone. They're well-resourced and focused teams of collaborating experts, each with their own specialization. They aren't starting with absolutely no clue how Bluetooth or WiFi work. They also potentially have access to information and hardware I simply don't have, like development devices, special cables, leaked source code, symbols files and so on.

Of course, an iPhone isn't designed to allow people to build capabilities like this. So what went so wrong that it was possible? Unfortunately, it's the same old story. A fairly trivial buffer overflow programming error in C++ code in the kernel parsing untrusted data, exposed to remote attackers.

In fact, this entire exploit uses just a single memory corruption vulnerability to compromise the flagship iPhone 11 Pro device. With just this one issue I was able to defeat all the mitigations in order to remotely gain native code execution and kernel memory read and write.

Relative to the size and complexity of these codebases of major tech companies, the sizes of the security teams dedicated to proactively auditing their products' source code to look for vulnerabilities are very small. Android and iOS are complete custom tech stacks. It's not just kernels and device drivers but dozens of attacker-reachable apps, hundreds of services and thousands of libraries running on devices with customized hardware and firmware.

Actually reading all the code, including every new line in addition to the decades of legacy code, is unrealistic, at least with the division of resources commonly seen in tech where the ratio of security engineers to developers might be 1:20, 1:40 or even higher.

To tackle this insurmountable challenge, security teams rightly place a heavy emphasis on design level review of new features. This is sensible: getting stuff right at the design phase can help limit the impact of the mistakes and bugs which will inevitably occur. For example, ensuring that a new hardware peripheral like a GPU can only ever access a restricted portion of physical memory helps constrain the worst-case outcome if the GPU is compromised by an attacker. The attacker is hopefully forced to find an additional vulnerability to "lengthen the exploit chain", having to use an ever-increasing number of vulnerabilities to hack a single device. Retrofitting constraints like this to already-shipping features would be much harder, if not impossible.

In addition to design-level reviews, security teams tackle the complexity of their products by attempting to constrain what an attacker might be able to do with a vulnerability. These are mitigations. They take many forms and can be general, like stack cookies, or application specific, like Structure ID in JavaScriptCore. The guarantees which can be made by mitigations are generally weaker than those made by design-level features but the goal is similar: to "lengthen the exploit chain", hopefully forcing an attacker to find a new vulnerability and incur some cost.

The third approach widely used by defensive teams is fuzzing, which attempts to emulate an attacker's vulnerability finding process with brute force. Fuzzing is often misunderstood as an effective method to discover easy-to-find vulnerabilities or "low-hanging fruit". A more precise description would be that fuzzing is an effective method to discover easy-to-fuzz vulnerabilities. Plenty of vulnerabilities which a skilled vulnerability researcher would consider low-hanging fruit can require reaching a program point that no fuzzer today will be able to reach, no matter the compute resources used.

The problem for tech companies, and certainly not unique to Apple, is that while design review, mitigations, and fuzzing are necessary for building secure codebases, they are far from sufficient.

Fuzzers cannot reason about code in the same way a skilled vulnerability researcher can. This means that without concerted manual effort, vulnerabilities with a relatively low cost-of-discovery remain fairly prevalent. A major focus of my work over the last few years has been attempting to highlight that the iOS codebase, just like any other major modern operating system, has a high vulnerability density. Not only that, but there's a high density of "good bugs", that is, vulnerabilities which enable the creation of powerful weird machines.

This notion of "good bugs" is something that offensive researchers understand intuitively but something which might be hard to grasp for those without an exploit development background. Thomas Dullien's weird machines paper provides the best introduction to the notion of weird machines and their applicability to exploitation. Given a sufficiently complex state machine operating on attacker-controlled input, a "good bug" allows the attacker-controlled input to instead become "code", with the "good bug" introducing a new, unexpected state transition into a new, unintended state machine. The art of exploitation then becomes the art of determining how one can use vulnerabilities to introduce sufficiently powerful new state transitions such that, as an end goal, the attacker-supplied input becomes code for a new, weird machine capable of arbitrary system interactions.

It's with this weird machine that mitigations will be defeated; even a mitigation without implementation flaws is usually no match for a sufficiently powerful weird machine. An attacker looking for vulnerabilities is looking specifically for weird machine primitives. Their auditing process is focused on a particular attack-surface and particular vulnerability classes. This stands in stark contrast to a product security team with responsibility for every possible attack surface and every vulnerability class.

As things stand now in November 2020, I believe it's still quite possible for a motivated attacker with just one vulnerability to build a sufficiently powerful weird machine to completely, remotely compromise top-of-the-range iPhones. In fact, the parts of that process which are hardest probably aren't those which you might expect, at least not without an appreciation for weird machines.

Vulnerability discovery remains a fairly linear function of time invested. Defeating mitigations remains a matter of building a sufficiently powerful weird machine. Concretely, Pointer Authentication Codes (PAC) meant I could no longer take the popular direct shortcut to a very powerful weird machine via trivial program counter control and ROP or JOP. Instead I built a remote arbitrary memory read and write primitive which in practice is just as powerful and something which the current implementation of PAC, which focuses almost exclusively on restricting control-flow, wasn't designed to mitigate.

Secure system design didn't save the day because of the inevitable tradeoffs involved in building shippable products. Should such a complex parser driving multiple, complex state machines really be running in kernel context against untrusted, remote input? Ideally, no, and this was almost certainly flagged during a design review. But there are tight timing constraints for this particular feature which means isolating the parser is non-trivial. It's certainly possible, but that would be a major engineering challenge far beyond the scope of the feature itself. At the end of the day, it's features which sell phones and this feature is undoubtedly very cool; I can completely understand the judgement call which was made to allow this design despite the risks.

But risk means there are consequences if things don't go as expected. When it comes to software vulnerabilities it can be hard to connect the dots between those risks which were accepted and the consequences. I don't know if I'm the only one who found these vulnerabilities, though I'm the first to tell Apple about them and work with Apple to fix them. Over the next 30'000 words I'll show you what I was able to do with a single vulnerability in this attack surface and hopefully give you a new or renewed insight into the power of the weird machine.

I don't think all hope is lost; there's just an awful lot more left to do. In the conclusion I'll try to share some ideas for what I think might be required to build a more secure iPhone.

If you want to follow along you can find details attached to issue 1982 in the Project Zero issue tracker.

Vulnerability discovery

In 2018 Apple shipped an iOS beta build without stripping function name symbols from the kernelcache. While this was almost certainly an error, events like this help researchers on the defending side enormously. One of the ways I like to procrastinate is to scroll through this enormous list of symbols, reading bits of assembly here and there. One day I was looking through IDA's cross-references to memmove with no particular target in mind when something jumped out as being worth a closer look:

IDA Pro's cross references window shows a large number of calls to memmove. A callsite in IO80211AWDLPeer::parseAwdlSyncTreeTLV is highlighted

Having function names provides a huge amount of missing context for the vulnerability researcher. A completely stripped 30+MB binary blob such as the iOS kernelcache can be overwhelming. There's a huge amount of work to determine how everything fits together. What bits of code are exposed to attackers? What sanity checking is happening and where? What execution context are different parts of the code running in?

In this case this particular driver is also available on MacOS, where function name symbols are not stripped.

There are three things which made this highlighted function stand out to me:

1) The function name:

IO80211AWDLPeer::parseAwdlSyncTreeTLV

At this point, I had no idea what AWDL was. But I did know that TLVs (Type, Length, Value) are often used to give structure to data, and parsing a TLV might mean it's coming from somewhere untrusted. And the 80211 is a giveaway that this probably has something to do with WiFi. Worth a closer look. Here's the raw decompilation from Hex-Rays which we'll clean up later:

    __int64 __fastcall IO80211AWDLPeer::parseAwdlSyncTreeTLV(__int64 this, __int64 buf)
    {
      const void *v3; // x20
      _DWORD *v4; // x21
      int v5; // w8
      unsigned __int16 v6; // w25
      unsigned __int64 some_u16; // x24
      int v8; // w21
      __int64 v9; // x8
      __int64 v10; // x9
      unsigned __int8 *v11; // x21

      v3 = (const void *)(buf + 3);
      v4 = (_DWORD *)(this + 1203);
      v5 = *(_DWORD *)(this + 1203);
      if ( ((v5 + 1) & 0xFFFFu) <= 0xA )
        v6 = v5 + 1;
      else
        v6 = 10;
      some_u16 = *(unsigned __int16 *)(buf + 1) / 6uLL;
      if ( (_DWORD)some_u16 == v6 )
      {
        some_u16 = v6;
      }
      else
      {
        IO80211Peer::logDebug(
          this,
          0x8000000000000uLL,
          "Peer %02X:%02X:%02X:%02X:%02X:%02X: PATH LENGTH error hc %u calc %u \n",
          *(unsigned __int8 *)(this + 32),
          *(unsigned __int8 *)(this + 33),
          *(unsigned __int8 *)(this + 34),
          *(unsigned __int8 *)(this + 35),
          *(unsigned __int8 *)(this + 36),
          *(unsigned __int8 *)(this + 37),
          v6,
          some_u16);
        *v4 = some_u16;
        v6 = some_u16;
      }
      v8 = memcmp((const void *)(this + 5520), v3, (unsigned int)(6 * some_u16));
      memmove((void *)(this + 5520), v3, (unsigned int)(6 * some_u16));

Definitely looks like it's parsing something. There's some fiddly byte manipulation; something which sort of looks like a bounds check and an error message.

2) The second thing which stands out is the error message string:

"Peer %02X:%02X:%02X:%02X:%02X:%02X: PATH LENGTH error hc %u calc %u\n"

Any kind of LENGTH error sounds like fun to me. Especially when you look a little closer...

3) The control flow graph.

Reading the code a bit more closely it appears that although the log message contains the word "error" there's nothing which is being treated as an error condition here. IO80211Peer::logDebug isn't a fatal logging API, it just logs the message string. Tracing back the length value which is passed to memmove, regardless of which path is taken we still end up with what looks like an arbitrary u16 value from the input buffer (rounded down to the nearest multiple of 6) passed as the length argument to memmove.
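To make that data flow concrete, here is a minimal Python model of the length computation in the decompiled excerpt. It is a sketch rather than the driver's code: the function name, the `hop_count` parameter (standing in for the 32-bit field at `this + 1203`) and the little-endian reading of the length field are assumptions, and the logging call is reduced to a comment.

```python
import struct


def sync_tree_copy_length(tlv: bytes, hop_count: int) -> int:
    """Model the size argument that reaches memmove in the excerpt.

    tlv       -- raw TLV bytes: 1-byte type, 2-byte length, then the payload
    hop_count -- hypothetical name for the 32-bit field at this + 1203
    """
    # v6: (hop_count + 1) truncated to 16 bits and capped at 10.
    expected = (hop_count + 1) & 0xFFFF
    if expected > 10:
        expected = 10

    # some_u16: the sender-supplied length field divided by 6
    # (assumed little-endian, as on arm64).
    (length_field,) = struct.unpack_from("<H", tlv, 1)
    calculated = length_field // 6

    if calculated != expected:
        # The driver only logs "PATH LENGTH error" here and carries on,
        # storing the sender-derived value back into the peer object.
        pass

    # Both branches reach memmove with 6 * (length_field // 6) bytes.
    return 6 * calculated
```

With a length field of 1024 the model returns 1020 whatever `hop_count` is; nothing in this function compares the result with the size of the destination.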

Can it really be this easy? Typically, in my experience, bugs this shallow in real attack surfaces tend to not work out. There's usually a length check somewhere far away; you'll spend a few days trying to work out why you can't seem to reach the code with a bad size until you find it and realize this was a CVE from a decade ago. Still, worth a try.

But what even is this attack surface?

A first proof-of-concept

A bit of googling later we learn that awdl is a type of Welsh poetry, and also an acronym for an Apple-proprietary mesh networking protocol probably called Apple Wireless Direct Link. It appears to be used by AirDrop amongst other things.

The first goal is to determine whether we can really trigger this vulnerability remotely.

We can see from the casts in the parseAwdlSyncTreeTLV method that the type-length-value objects have a single-byte type then a two-byte length followed by a payload value.

In IDA selecting the function name and going View -> Open subviews -> Cross references (or pressing 'x') shows IDA only found one caller of this method:

    IO80211AWDLPeer::actionFrameReport
    ...
        case 0x14u:
          if (v109[20] >= 2)
            goto LABEL_126;
          ++v109[0x14];
          IO80211AWDLPeer::parseAwdlSyncTreeTLV(this, bytes);

So 0x14 is probably the type value, and v109 looks like it's probably counting the number of these TLVs.

Looking in the list of function names we can also see that there's a corresponding BuildSyncTreeTlv method. If we could get two machines to join an AWDL network, could we just use the MacOS kernel debugger to make the SyncTree TLV very large before it's sent?

Yes, you can. Using two MacOS laptops and enabling AirDrop on both of them I used a kernel debugger to edit the SyncTree TLV sent by one of the laptops, which caused the other one to kernel panic due to an out-of-bounds memmove.

If you're interested in exactly how to do that take a look at the original vulnerability report I sent to Apple on November 29th 2019. This vulnerability was fixed as CVE-2020-3843 on January 28th 2020 in iOS 13.3.1/MacOS 10.15.3.

Our journey is only just beginning. Getting from here to running an implant on an iPhone 11 Pro with no user interaction is going to take a while...

Prior Art

There are a series of papers from the Secure Mobile Networking Lab at TU Darmstadt in Germany (also known as SEEMOO) which look at AWDL. The researchers there have done a considerable amount of reverse engineering (in addition to having access to some leaked Broadcom source code) to produce these papers; they are invaluable to understand AWDL and pretty much the only resources out there.

The first paper One Billion Apples’ Secret Sauce: Recipe for the Apple Wireless Direct Link Ad hoc Protocol covers the format of the frames used by AWDL and the operation of the channel-hopping mechanism.

The second paper A Billion Open Interfaces for Eve and Mallory: MitM, DoS, and Tracking Attacks on iOS and macOS Through Apple Wireless Direct Link focuses more on AirDrop, one of the OS features which uses AWDL. This paper also examines how AirDrop uses Bluetooth Low Energy advertisements to enable AWDL interfaces on other devices.

The research group wrote an open source AWDL client called OWL (Open Wireless Link). Although I was unable to get OWL to work it was nevertheless an invaluable reference and I did use some of their frame definitions.

What is AWDL?

AWDL is an Apple-proprietary mesh networking protocol designed to allow Apple devices like iPhones, iPads, Macs and Apple Watches to form ad-hoc peer-to-peer mesh networks. Chances are that if you own an Apple device you're creating or connecting to these transient mesh networks multiple times a day without even realizing it.

If you've ever used AirDrop, streamed music to your HomePod or Apple TV via AirPlay or used your iPad as a secondary display with Sidecar then you've been using AWDL. And even if you haven't been using those features, if people nearby have been then it's quite possible your device joined the AWDL mesh network they were using anyway.

AWDL isn't a custom radio protocol; the radio layer is WiFi (specifically 802.11g and 802.11a).

Most

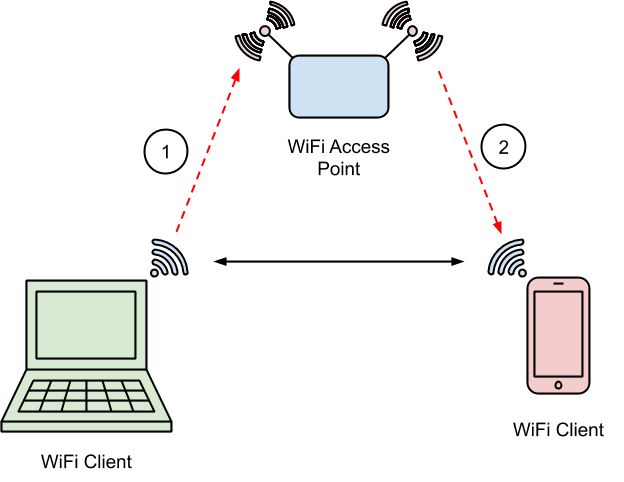

people's experience with WiFi involves connecting to an infrastructure

network. At home you might plug a WiFi access point into your modem

which creates a WiFi network. The access point broadcasts a network name

and accepts clients on a particular channel.

To reach other devices on the internet you send WiFi frames to the access point (1). The access point sends them to the modem (2) and the modem sends them to your ISP (3,4) which sends them to the internet:

The topology of a typical home network

To reach other devices on your home WiFi network you send WiFi frames to the access point and the access point relays them to the other devices:

WiFi clients communicate via an access point, even if they are within WiFi range of each other

In reality the wireless signals don't propagate as straight lines between the client and access point but spread out in space such that the two client devices may be able to see the frames transmitted by each other to the access point.

If WiFi client devices can already send WiFi frames directly to each other, then why have the access point at all? Without the complexity of the access point you could certainly have much more magical experiences which "just work", requiring no physical setup.

There are various protocols for doing just this, each with their own tradeoffs. Tunneled Direct Link Setup (TDLS) allows two devices already on the same WiFi network to negotiate a direct connection to each other such that frames won't be relayed by the access point.

Wi-Fi Direct allows two devices not already on the same network to establish an encrypted peer-to-peer Wi-Fi network, using WPS to bootstrap a WPA2-encrypted ad-hoc network.

Apple's AWDL doesn't require peers to already be on the same network to establish a peer-to-peer connection, but unlike Wi-Fi Direct, AWDL has no built-in encryption. Unlike TDLS and Wi-Fi Direct, AWDL networks can contain more than two peers and they can also form a mesh network configuration where multiple hops are required.

AWDL has one more trick up its sleeve: an AWDL client can be connected to an AWDL mesh network and a regular AP-based infrastructure network at the same time, using only one Wi-Fi chipset and antenna. To see how that works we need to look a little more at some Wi-Fi fundamentals.

WiFi fundamentals

There are over 20 years of WiFi standards spanning different frequency ranges of the electromagnetic spectrum, from as low as 54 MHz in 802.11af up to over 60 GHz in 802.11ad. Such networks are quite esoteric and consumer equipment uses frequencies near 2.4 GHz or 5 GHz. Ranges of frequencies are split into channels: for example in 802.11g channel 6 means a 22 MHz range between 2.426 GHz and 2.448 GHz.

Newer 5 GHz standards like 802.11ac allow for wider channels up to 160 MHz; 5 GHz channel numbers therefore encode both the center frequency and channel width. Channel 44 is a 20 MHz range between 5.210 GHz and 5.230 GHz whereas channel 46 is a 40 MHz range which starts at the same lower frequency as channel 44 of 5.210 GHz but extends up to 5.250 GHz.
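The channel arithmetic above can be checked with a few lines of Python. This is a sketch which assumes the usual 802.11 numbering convention, where a channel's center frequency is 2407 + 5 × channel MHz in the 2.4 GHz band and 5000 + 5 × channel MHz in the 5 GHz band; the function names and band labels are mine.

```python
# Base frequency in MHz for each band; channel numbers count up from it
# in 5 MHz steps. (2.4 GHz channel 14 is a special case, not handled here.)
BAND_BASE_MHZ = {"2.4GHz": 2407, "5GHz": 5000}


def center_mhz(channel: int, band: str) -> int:
    """Center frequency of a channel in MHz."""
    return BAND_BASE_MHZ[band] + 5 * channel


def channel_range_mhz(channel: int, band: str, width_mhz: int) -> tuple:
    """(lower, upper) edge of a channel in MHz for a given channel width."""
    center = center_mhz(channel, band)
    half = width_mhz // 2
    return (center - half, center + half)


if __name__ == "__main__":
    print(channel_range_mhz(6, "2.4GHz", 22))   # channel 6 in 802.11g
    print(channel_range_mhz(44, "5GHz", 20))    # 20 MHz channel 44
    print(channel_range_mhz(46, "5GHz", 40))    # 40 MHz channel 46
```

Channels 44 and 46 share a lower edge because their centers are 10 MHz apart while their half-widths differ by the same 10 MHz.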

AWDL typically sends and receives frames on channels 6 and 44. How does that work if you're also using your home WiFi network on a different channel?

Channel Hopping and Time Division Multiplexing

In order to appear to be connected to two separate networks on separate frequencies at the same time, AWDL-capable devices split time into 16ms chunks and tell the WiFi controller chip to quickly switch between the channel for the infrastructure network and the channel being used by AWDL:

A typical AWDL channel hopping sequence, alternating between small periods on AWDL social channels and longer periods on the AP channel

The actual channel sequence is dynamic. Peers broadcast their channel sequences and adapt their own sequence to match peers with which they wish to communicate. The periods when an AWDL peer is listening on an AWDL channel are known as Availability Windows.

In this way the device can appear to be connected to the access point whilst also participating in the AWDL mesh at the same time. Of course, frames might be missed from both the AP and the AWDL mesh but the protocols are treating radio as an unreliable transport anyway so this only really has an impact on throughput. A large part of the AWDL protocol involves trying to synchronize the channel switching between peers to improve throughput.
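As a rough illustration of the time-division idea, the sketch below maps a timestamp to a channel using fixed 16 ms slots and counts the slots in which two peers could hear each other. The sequences, the AP channel (36) and the function names are invented for the example; real AWDL sequences are negotiated dynamically, and this model assumes both peers' slot boundaries are already aligned.

```python
SLOT_MS = 16  # from the text: time is split into 16 ms chunks


def channel_at(t_ms: int, sequence: list) -> int:
    """Channel a device is tuned to at time t_ms, for a repeating sequence."""
    return sequence[(t_ms // SLOT_MS) % len(sequence)]


def shared_awdl_slots(seq_a: list, seq_b: list, awdl_channels=(6, 44)) -> int:
    """Count slots where both peers sit on the same AWDL channel.

    These are the slots in which the two availability windows overlap.
    """
    return sum(
        1 for a, b in zip(seq_a, seq_b)
        if a == b and a in awdl_channels
    )


if __name__ == "__main__":
    # Hypothetical sequences: mostly on the AP channel (36), with short
    # visits to the AWDL channels 6 and 44.
    peer_a = [6, 36, 36, 44, 36, 36]
    peer_b = [36, 6, 36, 44, 36, 36]
    print(channel_at(48, peer_a))             # slot 3 of peer_a
    print(shared_awdl_slots(peer_a, peer_b))  # overlap before synchronizing
    print(shared_awdl_slots(peer_a, peer_a))  # overlap once sequences match
```

The last two calls show why synchronization matters: the same amount of time spent on AWDL channels gives more usable overlap once the sequences line up.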

The SEEMOO labs paper has a much more detailed look at the AWDL channel hopping mechanism.

AWDL frames

These are the first software-controlled fields which go over the air in a WiFi frame:

    struct ieee80211_hdr {
      uint16_t frame_control;
      uint16_t duration_id;
      struct ether_addr dst_addr;
      struct ether_addr src_addr;
      struct ether_addr bssid_addr;
      uint16_t seq_ctrl;
    } __attribute__((packed));

The first word contains fields which define the type of this frame. These are broadly split into three frame families: Management, Control and Data. The building blocks of AWDL use a subtype of Management frames called Action frames.

The address fields in an 802.11 header can have different meanings depending on the context; for our purposes the first is the destination device MAC address, the second is the source device MAC and the third is the MAC address of the infrastructure network access point or BSSID.

Since AWDL is a peer-to-peer network and doesn't use an access point, the BSSID field of an AWDL frame is set to the hard-coded AWDL BSSID MAC of 00:25:00:ff:94:73. It's this BSSID which AWDL clients are looking for when they're trying to find other peers. Your router won't accidentally use this BSSID because Apple owns the 00:25:00 OUI.
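A short Python sketch of how the header fields described above could be unpacked and checked. The struct layout mirrors `struct ieee80211_hdr`; the bit positions of the type and subtype fields and the Action subtype value (13) come from the 802.11 standard rather than from this post, and the function names are mine.

```python
import struct

# Mirrors struct ieee80211_hdr: two u16s, three 6-byte MACs, one u16.
# 802.11 header fields are little-endian on the air.
HDR = struct.Struct("<HH6s6s6sH")

AWDL_BSSID = bytes.fromhex("002500ff9473")
TYPE_MANAGEMENT = 0
SUBTYPE_ACTION = 13


def parse_80211_header(frame: bytes) -> dict:
    """Split a raw frame into header fields and the remaining body."""
    fc, _duration, dst, src, bssid, _seq = HDR.unpack_from(frame, 0)
    return {
        "type": (fc >> 2) & 0x3,     # 0 = Management, 1 = Control, 2 = Data
        "subtype": (fc >> 4) & 0xF,  # meaning depends on the type
        "dst": dst,
        "src": src,
        "bssid": bssid,
        "body": frame[HDR.size:],
    }


def looks_like_awdl(hdr: dict) -> bool:
    """True for a Management/Action frame addressed to the AWDL BSSID."""
    return (
        hdr["type"] == TYPE_MANAGEMENT
        and hdr["subtype"] == SUBTYPE_ACTION
        and hdr["bssid"] == AWDL_BSSID
    )
```

Note that none of these fields are authenticated: the source MAC and BSSID are whatever the sender chose to put on the air.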

The format of the bytes following the header depends on the frame type. For an Action frame the next byte is a category field. There are a large number of categories which allow devices to exchange all kinds of information. For example category 5 covers various types of radio measurements like noise histograms.

The special category value 0x7f defines this frame as a vendor-specific action frame meaning that the next three bytes are the OUI of the vendor responsible for this custom action frame format.

Apple owns the OUI 0x00 0x17 0xf2 and this is the OUI used for AWDL action frames. Every byte in the frame after this is now proprietary, defined by Apple rather than an IEEE standard.
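The category and OUI check described above amounts to comparing four bytes. A minimal sketch, with function names of my own choosing; `body` is assumed to be the bytes immediately following the 802.11 header of an Action frame:

```python
CATEGORY_VENDOR_SPECIFIC = 0x7F
APPLE_OUI = bytes([0x00, 0x17, 0xF2])


def is_apple_vendor_action(body: bytes) -> bool:
    """True if the Action frame body starts with category 0x7f + Apple's OUI."""
    return (
        len(body) >= 4
        and body[0] == CATEGORY_VENDOR_SPECIFIC
        and body[1:4] == APPLE_OUI
    )


def proprietary_payload(body: bytes):
    """Return the Apple-defined bytes after the OUI, or None if not a match."""
    if not is_apple_vendor_action(body):
        return None
    return body[4:]
```

Everything returned by `proprietary_payload` is in a format defined by Apple, which is where the reverse engineering effort starts.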

The SEEMOO labs team have done a great job reversing the AWDL action frame format and they developed a wireshark dissector.

AWDL Action frames have a fixed-sized header followed by a variable length collection of TLVs:

The layout of fields in an AWDL frame: 802.11 header, action frame header, AWDL fixed header and variable length AWDL payload

Each TLV has a single-byte type followed by a two-byte length which is the length of the variable-sized payload in bytes.
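The TLV layout just described can be walked with a short loop. The sketch below assumes the two-byte length is little-endian and that `data` already points at the first TLV (the size of the fixed AWDL header is not covered here); the helper names are mine.

```python
import struct


def build_tlv(tlv_type: int, value: bytes) -> bytes:
    """Serialize one TLV: 1-byte type, 2-byte length, then the payload."""
    return struct.pack("<BH", tlv_type, len(value)) + value


def iter_tlvs(data: bytes):
    """Yield (type, value) for each TLV in data.

    Raises ValueError if a length field runs past the end of the buffer;
    fewer than 3 trailing bytes are ignored.
    """
    offset = 0
    while offset + 3 <= len(data):
        tlv_type = data[offset]
        (length,) = struct.unpack_from("<H", data, offset + 1)
        value = data[offset + 3 : offset + 3 + length]
        if len(value) != length:
            raise ValueError("TLV length runs past end of buffer")
        yield tlv_type, value
        offset += 3 + length
```

A check like the one in `iter_tlvs` only proves that a TLV fits inside the received frame. It says nothing about whether the payload fits wherever a type-specific parser later copies it.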

There are two types of AWDL action frame: Master Indication Frames (MIF) and Periodic Synchronization Frames (PSF). They differ only in their type field and the collection of TLVs they contain.

An AWDL mesh network has a single master node decided by an election process. Each node broadcasts a MIF containing a master metric parameter; the node with the highest metric becomes the master node. It is this master node's PSF timing values which should be adopted as the true timing values for all the other nodes to synchronize to; in this way their availability windows can overlap and the network can have a higher throughput.
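Reduced to its core, the election rule is a maximum over advertised metrics. The sketch below is only that: the dictionary of metrics and the function name are illustrative, and how ties are broken is not described here (Python's `max` simply keeps the first maximum it encounters).

```python
def elect_master(metrics: dict) -> str:
    """Return the peer whose advertised master metric is highest.

    metrics maps a peer identifier (e.g. a MAC address string) to the
    master metric that peer broadcast in its MIF.
    """
    return max(metrics, key=lambda peer: metrics[peer])


if __name__ == "__main__":
    advertised = {"aa:aa": 10, "bb:bb": 500, "cc:cc": 60}  # made-up values
    print(elect_master(advertised))
```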

Frame processing

Back in 2017, Project Zero researcher Gal Beniamini published a seminal 5-part blog post series entitled Over The Air where he exploited a vulnerability in the Broadcom WiFi chipset to gain native code execution on the WiFi controller, then pivoted via an iOS kernel bug in the chipset-to-Application Processor interface to achieve arbitrary kernel memory read/write.

In that case, Gal targeted a vulnerability in the Broadcom firmware when it was parsing data structures related to TDLS. The raw form of these data structures was handled by the chipset firmware itself and never made it to the application processor.

In contrast, for AWDL the frames appear to be parsed in their entirety on the Application Processor by the kernel driver. Whilst this means we can explore a lot of the AWDL code, it also means that we're going to have to build the entire exploit on top of primitives we can build with the AWDL parser, and those primitives will have to be powerful enough to remotely compromise the device. Apple continues to ship new mitigations with each iOS release and hardware revision, and we're of course going to target the latest iPhone 11 Pro with the largest collection of these mitigations in place.

Can we really build something powerful enough to remotely defeat kernel pointer authentication just with a linear heap overflow in a WiFi frame parser? Defeating mitigations usually involves building up a library of tricks to help build more and more powerful primitives. You might start with a linear heap overflow and use it to build an arbitrary read, then use that to help build an arbitrary bit flip primitive and so on.

I've built a library of tricks and techniques like this for doing local privilege escalations on iOS but I'll have to start again from scratch for this brand new attack surface.

A brief tour of the AWDL codebase

The first two C++ classes to familiarize ourselves with are IO80211AWDLPeer and IO80211AWDLPeerManager. There's one IO80211AWDLPeer object for each AWDL peer which a device has recently received a frame from. A background timer destroys inactive IO80211AWDLPeer objects. There's a single instance of the IO80211AWDLPeerManager which is responsible for orchestrating interactions between this device and other peers.

Note that although we have some function names from the iOS 12 beta 1 kernelcache and the MacOS IO80211Family driver we don't have object layout information. Brandon Azad pointed out that the MacOS prelinked kernel image does contain some structure layout information in the __CTF.__ctf section which can be parsed by the dtrace ctfdump tool. Unfortunately this seems to only contain structures from the open source XNU code.

The sizes of OSObject-based IOKit objects can easily be determined statically but the names and types of individual fields cannot. One of the most time-consuming tasks of this whole project was the painstaking process of reverse engineering the types and meanings of a huge number of the fields in these objects. Each IO80211AWDLPeer object is almost 6KB; that's a lot of potential fields. Having structure layout information would probably have saved months.

If you're a defender building a threat model don't interpret this the wrong way: I would assume any competent real-world exploit development team has this information; either from images or devices with full debug symbols they have acquired with or without Apple's consent, insider access, or even just from monitoring every single firmware image ever publicly released to check whether debug symbols were released by accident. Larger groups could even have people dedicated to building custom reversing tools.

Six years ago I had hoped Project Zero would be able to get legitimate access to data sources like this. Six years later and I am still spending months reversing structure layouts and naming variables.

We'll take IO80211AWDLPeerManager::actionFrameInput as the point where untrusted raw AWDL frame data starts being parsed. There is actually a separate, earlier processing layer in the WiFi chipset driver but its parsing is minimal.

Each frame received while the device is listening on a social channel which was sent to the AWDL BSSID ends up at actionFrameInput, wrapped in an mbuf structure. Mbufs are an anachronistic data structure used for wrapping collections of networking buffers. The mbuf API is the stuff of nightmares, but that's not in scope for this blogpost.

The mbuf buffers are concatenated to get a contiguous frame in memory for parsing, then IO80211PeerManager::findPeer is called, passing the source MAC address from the received frame:

IO80211AWDLPeer*
IO80211PeerManager::findPeer(struct ether_addr *peer_mac)

If an AWDL frame has recently been received from this source MAC then this function returns a pointer to an existing IO80211AWDLPeer structure representing the peer with that MAC. The IO80211AWDLPeerManager uses a fairly complicated priority queue data structure called IO80211CommandQueue to store pointers to these currently active peers.

If the peer isn't found in the IO80211AWDLPeerManager's queue of peers then a new IO80211AWDLPeer object is allocated to represent this new peer and it's inserted into the IO80211AWDLPeerManager's peers queue.

Once a suitable peer object has been found the IO80211AWDLPeerManager then calls the actionFrameReport method on the IO80211AWDLPeer so that it can handle the action frame.
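The lookup-or-allocate flow described above can be sketched in plain C. This is only an illustration under loud assumptions: the real driver keeps its peers in an IO80211CommandQueue, whereas the fixed-size table, the demo_peer_t type and the function names below are hypothetical stand-ins and not the driver's code.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

#define DEMO_MAX_PEERS 16 /* hypothetical cap, not taken from the driver */

typedef struct {
  uint8_t mac[6];
  int in_use;
} demo_peer_t;

typedef struct {
  demo_peer_t peers[DEMO_MAX_PEERS];
} demo_peer_manager_t;

/* Return the existing peer for this source MAC, or NULL if there is none. */
static demo_peer_t *demo_find_peer(demo_peer_manager_t *mgr, const uint8_t mac[6]) {
  for (size_t i = 0; i < DEMO_MAX_PEERS; i++) {
    if (mgr->peers[i].in_use && memcmp(mgr->peers[i].mac, mac, 6) == 0) {
      return &mgr->peers[i];
    }
  }
  return NULL;
}

/* Look the peer up; if the MAC is new, claim a free slot for it. */
static demo_peer_t *demo_find_or_create_peer(demo_peer_manager_t *mgr, const uint8_t mac[6]) {
  demo_peer_t *peer = demo_find_peer(mgr, mac);
  if (peer != NULL) {
    return peer;
  }
  for (size_t i = 0; i < DEMO_MAX_PEERS; i++) {
    if (!mgr->peers[i].in_use) {
      memcpy(mgr->peers[i].mac, mac, 6);
      mgr->peers[i].in_use = 1;
      return &mgr->peers[i];
    }
  }
  return NULL; /* table full */
}
```

The property that matters later is that every previously unseen source MAC results in a fresh allocation, so the sender of the frames decides how many peer objects exist.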

This method is responsible for most of the AWDL action frame handling and contains most of the untrusted parsing. It first updates some timestamps then reads various fields from TLVs in the frame using the IO80211AWDLPeerManager::getTlvPtrForType method to extract them directly from the mbuf. After this initial parsing comes the main loop which takes each TLV in turn and parses it.

First each TLV is passed to IO80211AWDLPeer::tlvCheckBounds. This method has a hardcoded list of specific minimum and maximum TLV lengths for some of the supported TLV types. For types not explicitly listed it enforces a maximum length of 1024 bytes. I mentioned earlier that I often encounter code constructs which look like shallow memory corruption only to later discover a bounds check far away. This is exactly that kind of construct, and is in fact where Apple added a bounds check in the patch.

Type 0x14 (which has the vulnerability in the parser) isn't explicitly listed in tlvCheckBounds so it gets the default upper length limit of 1024, significantly larger than the 60 byte buffer allocated for the destination buffer in the IO80211AWDLPeer structure.

This pattern of separating bounds checks away from parsing code is fragile; it's too easy to forget or not realize that when adding code for a new TLV type it's also a requirement to update the tlvCheckBounds function. If this pattern is used, try to come up with a way to enforce that new code must explicitly declare an upper bound here. One option could be to ensure an enum is used for the type and wrap the tlvCheckBounds method in a pragma to temporarily enable clang's -Wswitch-enum warning as an error:

#pragma clang diagnostic push
#pragma clang diagnostic error "-Wswitch-enum"

IO80211AWDLPeer::tlvCheckBounds(...) {
  switch(tlv->type) {
    case type_a:
      ...;
    case type_b:
      ...;
  }
}

#pragma clang diagnostic pop

This causes a compilation error if the switch statement doesn't have an explicit case statement for every value of the tlv->type enum.
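A fuller, compilable version of that idea might look like the sketch below. The type numbers 4, 6 and 0x14 and the 60-byte SyncTree limit come from this post; the enum names, the function name and the other two limits are made up for illustration. The pragmas only have an effect when building with clang.

```c
#include <stddef.h>

/* Hypothetical enum covering only the TLV types mentioned in this post. */
typedef enum {
  DEMO_TLV_SYNC_PARAMS    = 4,
  DEMO_TLV_SERVICE_PARAMS = 6,
  DEMO_TLV_SYNC_TREE      = 0x14
} demo_tlv_type_t;

#pragma clang diagnostic push
#pragma clang diagnostic error "-Wswitch-enum"

/* Returns 1 if len is acceptable for this type, 0 otherwise.
 * Adding a new enumerator without a case below now fails the build,
 * even though a default label is present. */
static int demo_tlv_check_bounds(demo_tlv_type_t type, size_t len) {
  size_t max_len;
  switch (type) {
    case DEMO_TLV_SYNC_PARAMS:
      max_len = 128; /* illustrative value only */
      break;
    case DEMO_TLV_SERVICE_PARAMS:
      max_len = 64;  /* illustrative value only */
      break;
    case DEMO_TLV_SYNC_TREE:
      max_len = 60;  /* size of the sync_tree_macs buffer */
      break;
    default:
      return 0;      /* unknown value from the wire: reject */
  }
  return len <= max_len;
}

#pragma clang diagnostic pop
```

The design choice here is that unknown types are rejected rather than given a generous default, so there is no implicit upper bound for a new parser to inherit by accident.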

Static analysis tools like Semmle can also help here. The EnumSwitch class can be used, as in this example code, to check whether all enum values are explicitly handled.

If the tlvCheckBounds checks pass then there is a switch statement with a case to parse each supported TLV:

SyncTree vulnerability in context

Here's a cleaned up decompilation of the relevant portions of the parseAwdlSyncTreeTLV method which contains the vulnerability:

int
IO80211AWDLPeer::parseAwdlSyncTreeTLV(awdl_tlv* tlv)
{
  u64 new_sync_tree_size;

  u32 old_sync_tree_size = this->n_sync_tree_macs + 1;
  if (old_sync_tree_size >= 10 ) {
    old_sync_tree_size = 10;
  }

  if (old_sync_tree_size == tlv->len/6 ) {
    new_sync_tree_size = old_sync_tree_size;
  } else {
    new_sync_tree_size = tlv->len/6;
    this->n_sync_tree_macs = new_sync_tree_size;
  }

  memcpy(this->sync_tree_macs, &tlv->val[0], 6 * new_sync_tree_size);
  ...

sync_tree_macs is a 60-byte inline array in the IO80211AWDLPeer structure, at offset +0x1648. That's enough space to store 10 MAC addresses. The IO80211AWDLPeer object is 0x16a8 bytes in size which means it will be allocated in the kalloc.6144 zone.

tlvCheckBounds will enforce a maximum value of 1024 for the length of the SyncTree TLV. The TLV parser will round that value down to the nearest multiple of 6 and copy that number of bytes into the sync_tree_macs array at +0x1648. This will be our memory corruption primitive: a linear heap buffer overflow in 6-byte chunks which can corrupt all the fields in the IO80211AWDLPeer object after the sync_tree_macs array at +0x1648 and then a few hundred bytes off of the end of the kalloc.6144 zone chunk. We can easily cause IO80211AWDLPeer objects to be allocated next to each other by sending AWDL frames from a large number of different spoofed source MAC addresses in quick succession. This gives us four rough primitives to think about as we start to find a path to exploitation:

1) Corrupting fields after the sync_tree_macs array in the IO80211AWDLPeer object:

Overflowing into the fields at the end of the peer object

2) Corrupting the lower fields of an IO80211AWDLPeer object groomed next to this one:

Overflowing into the fields at the start of a peer object next to this one

3) Corrupting the lower bytes of another object type we can groom to follow a peer in kalloc.6144:

Overflowing into a different type of object next to this peer in the same zone

4) Meta-grooming the zone allocator to place a peer object at a zone boundary so we can corrupt the early bytes of an object from another zone:

Overflowing into a different type of object in a different zone

We'll revisit these options in greater detail soon.
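The reach of the overflow follows directly from the numbers given above, and can be checked with a few lines of C. The offsets and sizes are the ones quoted in this post; the macro and function names are just labels chosen for this sketch.

```c
#include <stddef.h>

#define SYNC_TREE_OFFSET 0x1648u /* offset of sync_tree_macs in the peer object */
#define SYNC_TREE_BYTES  60u     /* room for 10 MAC addresses */
#define PEER_OBJECT_SIZE 0x16a8u /* size of an IO80211AWDLPeer */
#define ZONE_CHUNK_SIZE  6144u   /* kalloc.6144 element size */
#define MAC_BYTES        6u

/* Bytes copied by the memcpy for a SyncTree TLV of the given length:
 * the length is rounded down to a whole number of MAC addresses. */
static size_t bytes_copied(size_t tlv_len) {
  return (tlv_len / MAC_BYTES) * MAC_BYTES;
}

/* Bytes written past the end of the sync_tree_macs array. */
static size_t overflow_past_array(size_t tlv_len) {
  size_t n = bytes_copied(tlv_len);
  return n > SYNC_TREE_BYTES ? n - SYNC_TREE_BYTES : 0;
}

/* Bytes written past the end of the kalloc.6144 chunk holding the peer. */
static size_t overflow_past_chunk(size_t tlv_len) {
  size_t end = SYNC_TREE_OFFSET + bytes_copied(tlv_len);
  return end > ZONE_CHUNK_SIZE ? end - ZONE_CHUNK_SIZE : 0;
}

/* Bytes of peer fields which live after the array inside the object. */
static size_t fields_after_array(void) {
  return PEER_OBJECT_SIZE - (SYNC_TREE_OFFSET + SYNC_TREE_BYTES);
}
```

With the default limit of 1024 this gives 1020 bytes copied, 960 of them past the end of the array and 580 past the end of the zone chunk, while only 36 bytes of the peer's own fields sit after the array.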

Getting on the air

At this point we understand enough about the AWDL frame format to start trying to get controlled, arbitrary data going over the air and reach the frame parsing entrypoint.

I tried for a long time to get the open source academic OWL project to build and run successfully, sadly without success. In order to start making progress I decided to write my own AWDL client from scratch. Another approach could have been to write a MacOS kernel module to interact with the existing AWDL driver, which may have simplified some aspects of the exploit but also made others much harder.

I started off using an old Netgear WG111v2 WiFi adapter I've had for many years which I knew could do monitor mode and frame injection, albeit only on 2.4 GHz channels. It uses an rtl8187 chipset. Since I wanted to use the Linux drivers for these adapters I bought a Raspberry Pi 4B to run the exploit.

In the past I've used Scapy for crafting network packets from scratch. Scapy can craft and inject arbitrary 802.11 frames, but since we're going to need a lot of control over injection timing it might not be the best tool. Scapy uses libpcap to interact with the hardware to inject raw frames so I took a look at libpcap. Some googling later I found this excellent tutorial example which demonstrates exactly how to use libpcap to inject a raw 802.11 frame. Let's dissect exactly what's required:

Radiotap

We've seen the structure of the data in 802.11 AWDL frames; there will be an ieee80211 header at the start, an Apple OUI, then the AWDL action frame header and so on. If our WiFi adaptor were connected to a WiFi network, this might be enough information to transmit such a frame. The problem is that we're not connected to any network. This means we need to attach some metadata to our frame to tell the WiFi adaptor exactly how it should get this frame on to the air. For example, what channel and with what bandwidth and modulation scheme should it use to inject the frame? Should it attempt re-transmits until an ACK is received? What signal strength should it use to inject the frame?

Radiotap is a standard for expressing exactly this type of frame metadata, both when injecting frames and receiving them. It's a slightly fiddly variable-sized header which you can prepend on the front of a frame to be injected (or read off the start of a frame which you've sniffed.)

Whether the radiotap fields you specify are actually respected and used depends on the driver you are using - a driver may choose to simply not allow userspace to specify many aspects of injected frames. Here's an example radiotap header captured from an AWDL frame using the built-in MacOS packet sniffer on a MacBook Pro. Wireshark has parsed the binary radiotap format for us:

Wireshark parses radiotap headers in pcaps and shows them in a human-readable form

From this radiotap header we can see a timestamp, the data rate used for transmission, the channel (5.220 GHz which is channel 44) and the modulation scheme (OFDM). We can also see an indication of the strength of the received signal and a measure of the noise.

The tutorial gave the following radiotap header:

static uint8_t u8aRadiotapHeader[] = {
  0x00, 0x00, // version
  0x18, 0x00, // size
  0x0f, 0x80, 0x00, 0x00, // included fields
  0x00, 0x00, 0x00, 0x00, 0x00, 0x00, 0x00, 0x00, // timestamp
  0x10, // add FCS
  0x00, // rate
  0x00, 0x00, 0x00, 0x00, // channel
  0x08, 0x00, // NOACK; don't retry
};
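To make the layout of that header a little more concrete, here is a small decoder for the fixed first 8 bytes of a radiotap header (version, pad, little-endian length and the little-endian "present" bitmap). The bit positions are the ones defined by the radiotap standard; the struct and function names are my own for this sketch and it deliberately ignores the variable-length fields which follow.

```c
#include <stddef.h>
#include <stdint.h>

/* The fixed part of every radiotap header; multi-byte fields are little-endian. */
typedef struct {
  uint8_t  version;
  uint8_t  pad;
  uint16_t length;  /* total header length in bytes, fields included */
  uint32_t present; /* bitmap describing which fields follow */
} radiotap_fixed_t;

/* Bit positions in the present bitmap for the fields used above. */
enum {
  RT_BIT_TSFT     = 0,
  RT_BIT_FLAGS    = 1,
  RT_BIT_RATE     = 2,
  RT_BIT_CHANNEL  = 3,
  RT_BIT_TX_FLAGS = 15
};

/* Decode the first 8 bytes. Returns 0 on success, -1 if the buffer is too
 * short or the declared length is inconsistent with it. */
static int radiotap_parse_fixed(const uint8_t *buf, size_t len, radiotap_fixed_t *out) {
  if (len < 8) {
    return -1;
  }
  out->version = buf[0];
  out->pad     = buf[1];
  out->length  = (uint16_t)(buf[2] | (buf[3] << 8));
  out->present = (uint32_t)buf[4] | ((uint32_t)buf[5] << 8) |
                 ((uint32_t)buf[6] << 16) | ((uint32_t)buf[7] << 24);
  if (out->length < 8 || out->length > len) {
    return -1;
  }
  return 0;
}

/* Returns 1 if the given field is marked as present. */
static int radiotap_has_field(const radiotap_fixed_t *hdr, int bit) {
  return (int)((hdr->present >> bit) & 1u);
}
```

Run over the tutorial's bytes this reports a 24-byte header with the timestamp, flags, rate, channel and TX flags fields present, which matches the comments in the array: 8 + 8 + 1 + 1 + 4 + 2 = 24 bytes.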

With knowledge of radiotap and a basic header it's not too tricky to get an AWDL frame on to the air using the pcap_inject interface and a wireless adaptor in monitor mode:

int pcap_inject(pcap_t *p, const void *buf, size_t size)

Of course, this doesn't immediately work and with some trial and error it seems that the rate and channel fields aren't being respected. Injection with this adaptor seems to only work at 1Mbps, and the channel specified in the radiotap header won't be the one used for injection. This isn't such a problem as we can still easily set the wifi adaptor channel manually:

iw dev wlan0 set channel 6

Injection at 1Mbps is exceptionally slow but this is enough to get a test AWDL frame on to the air and we can see it in Wireshark on another device in monitor mode. But nothing seems to be happening on a target device. Time for some debugging!

Debugging with DTrace

The SEEMOO labs paper had already suggested setting some MacOS boot arguments to enable more verbose logging from the AWDL kernel driver. These log messages were incredibly helpful but often you want more information than you can get from the logs.

For the initial report PoC I showed how to use the MacOS kernel debugger to modify an AWDL frame which was about to be transmitted. Typically, in my experience, the MacOS kernel debugger is exceptionally unwieldy and unreliable. Whilst you can technically script it using lldb's python bindings, I wouldn't recommend it.

Apple does have one trick up their sleeve however: DTrace!

Where the MacOS kernel debugger is awful in my opinion, DTrace is exceptional. DTrace is a dynamic tracing framework originally developed by Sun Microsystems for Solaris. It's been ported to many platforms including MacOS and ships by default. It's the magic behind tools such as Instruments.

DTrace allows you to hook in little snippets of tracing code almost wherever you want, both in userspace programs, and, amazingly, the kernel. DTrace has its quirks. Hooks are written in the D language which doesn't have loops and the scoping of variables takes a little while to get your head around, but it's the ultimate debugging and reversing tool.

For example, I used this DTrace script on MacOS to log whenever a new IO80211AWDLPeer object was allocated, printing its heap address and MAC address:

self char* mac;

fbt:com.apple.iokit.IO80211Family:_ZN15IO80211AWDLPeer21withAddressAndManagerEPKhP22IO80211AWDLPeerManager:entry {
  self->mac = (char*)arg0;
}

fbt:com.apple.iokit.IO80211Family:_ZN15IO80211AWDLPeer21withAddressAndManagerEPKhP22IO80211AWDLPeerManager:return {
  printf("new AWDL peer: %02x:%02x:%02x:%02x:%02x:%02x allocation:%p",
         self->mac[0], self->mac[1], self->mac[2], self->mac[3],
         self->mac[4], self->mac[5], arg1);
}

Here we're creating two hooks, one which runs at a function entry point and the other which runs just before that same function returns. We can use the self-> syntax to pass variables between the entry point and return point and DTrace makes sure that the entries and returns match up properly.

We have to use the mangled C++ symbol in dtrace scripts; using c++filt we can see the demangled version:

$ c++filt -n _ZN15IO80211AWDLPeer21withAddressAndManagerEPKhP22IO80211AWDLPeerManager

IO80211AWDLPeer::withAddressAndManager(unsigned char const*, IO80211AWDLPeerManager*)

The entry hook "saves" the pointer to the MAC address which is passed as the first argument; associating it with the current thread and stack frame. The return hook then prints out that MAC address along with the return value of the function (arg1 in a return hook is the function's return value) which in this case is the address of the newly-allocated IO80211AWDLPeer object.

With DTrace you can easily prototype custom heap logging tools. For example if you're targeting a particular allocation size and wish to know what other objects are ending up in there you could use something like the following DTrace script:

/* some globals with values */
BEGIN {
  target_size_min = 97;
  target_size_max = 128;
}

fbt:mach_kernel:kalloc_canblock:entry {
  self->size = *(uint64_t*)arg0;
}

fbt:mach_kernel:kalloc_canblock:return
/self->size >= target_size_min &&
 self->size <= target_size_max /
{
  printf("target allocation %x = %x", self->size, arg1);
  stack();
}

The expression between the two /'s allows the hook to be conditionally executed, in this case limiting it to calls where kalloc_canblock has been called with a size between target_size_min and target_size_max. The built-in stack() function will print a stack trace, giving you some insight into the allocations within a particular size range. You could also use ustack() to continue that stack trace in userspace if this kernel allocation happened due to a syscall for example.

DTrace can also safely dereference invalid addresses without kernel panicking, making it very useful for prototyping and debugging heap grooms. With some ingenuity it's also possible to do things like dump linked-lists and monitor for the destruction of particular objects.

I'd really recommend spending some time learning DTrace; once you get your head around its esoteric programming model you'll find it an immensely powerful tool.

Reaching the entrypoint

Using DTrace to log stack frames I was able to trace the path legitimate AWDL frames took through the code and determine how far my fake AWDL frames made it. Through this process I discovered that there are, at least on MacOS, two AWDL parsers in the kernel: the main one we've already seen inside the IO80211Family kext and a second, much simpler one in the driver for the particular chipset being used. There were three checks in this simpler parser which I was failing, each of which meant my fake AWDL frames never made it to the IO80211Family code:

Firstly, the source MAC address was being validated. MAC addresses actually contain multiple fields:

The first half of a MAC address is an OUI. The least significant bit of the first byte defines whether the address is multicast or unicast. The second bit defines whether the address is locally administered or globally unique.

Diagram used under CC BY-SA 2.5 By Inductiveload, modified/corrected by Kju - SVG drawing based on PNG uploaded by User:Vtraveller. This can be found on Wikipedia here

The source MAC address 01:23:45:67:89:ab from the libpcap example was an unfortunate choice as it has the multicast bit set. AWDL only wants to deal with unicast addresses and rejects frames from multicast addresses. Choosing a new MAC address to spoof without that bit set solved this problem.
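The two flag bits in the first byte of a MAC address are easy to test for. The helper names below are made up for this sketch; the bit positions are the standard ones described above.

```c
#include <stdint.h>

/* Least significant bit of the first byte: 1 = multicast, 0 = unicast. */
static int mac_is_multicast(const uint8_t mac[6]) {
  return (mac[0] & 0x01) != 0;
}

/* Second bit of the first byte: 1 = locally administered, 0 = globally unique. */
static int mac_is_locally_administered(const uint8_t mac[6]) {
  return (mac[0] & 0x02) != 0;
}
```

For 01:23:45:67:89:ab the first byte is 0x01, so mac_is_multicast returns 1, which is exactly why frames from that address were dropped.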

The next check was that the first two TLVs in the variable-length payload section of the frame must be a type 4 (sync parameters) then a type 6 (service parameters.)

Finally the channel number in the sync parameters had to match the channel on which the frame had actually been received.

With those three issues fixed I was finally able to get arbitrary controlled bytes to appear at the actionFrameReport method on a remote device and the next stage of the project could begin.
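As a rough model of the first two of those checks, the sketch below rejects multicast source addresses and then requires a type 4 TLV followed by a type 6 TLV. It assumes each TLV starts with a one-byte type and a two-byte little-endian length; that layout, and all of the names, are assumptions made for this illustration rather than something recovered from the chipset driver. The channel check is left out because it depends on the internal layout of the sync parameters TLV.

```c
#include <stddef.h>
#include <stdint.h>

#define DEMO_TLV_SYNC_PARAMS    4u
#define DEMO_TLV_SERVICE_PARAMS 6u
#define DEMO_TLV_HEADER_BYTES   3u /* assumed: u8 type + little-endian u16 length */

/* Read the TLV header at offset off. Returns the offset of the next TLV,
 * or 0 if the TLV would run outside the n-byte payload. */
static size_t demo_tlv_next(const uint8_t *p, size_t n, size_t off, uint8_t *type) {
  if (off > n || n - off < DEMO_TLV_HEADER_BYTES) {
    return 0;
  }
  size_t len = (size_t)p[off + 1] | ((size_t)p[off + 2] << 8);
  if (len > n - off - DEMO_TLV_HEADER_BYTES) {
    return 0;
  }
  *type = p[off];
  return off + DEMO_TLV_HEADER_BYTES + len;
}

/* Returns 1 if the frame would get past the two modelled checks. */
static int demo_passes_early_checks(const uint8_t src_mac[6],
                                    const uint8_t *tlvs, size_t n) {
  uint8_t type = 0;
  if (src_mac[0] & 0x01) {
    return 0; /* multicast source address */
  }
  size_t off = demo_tlv_next(tlvs, n, 0, &type);
  if (off == 0 || type != DEMO_TLV_SYNC_PARAMS) {
    return 0;
  }
  off = demo_tlv_next(tlvs, n, off, &type);
  if (off == 0 || type != DEMO_TLV_SERVICE_PARAMS) {
    return 0;
  }
  return 1;
}
```

A check like this is handy on the sending side too: running it over a frame before injecting it catches ordering mistakes without needing a round trip over the air.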

A framework for an AWDL client

We've seen that AWDL uses time division multiplexing to quickly switch between the channels used for AWDL (typically 6 and 44) and the channel used by the access point the device is connected to. By parsing the AWDL synchronization parameters TLV in the PSF and MIF frames sent by AWDL peers you can calculate when they will be listening in the future. The OWL project uses the Linux libev library to try to only transmit at the right moment when other peers will be listening.

There are a few problems with this approach for our purposes:

Firstly, and very importantly, this makes targeting difficult. AWDL action frames are (usually) sent to a broadcast destination MAC address (ff:ff:ff:ff:ff:ff.) It's a mesh network and these frames are meant to be used by all the peers for building up the mesh.

Whilst exploiting every listening AWDL device in proximity at the same time would be an interesting research problem and make for a cool demo video, it also presents many challenges far outside the initial scope. I really needed a way to ensure that only devices I controlled would process the AWDL frames I sent.

With some experimentation it turned out that all AWDL frames can also be sent to unicast addresses and devices would still parse them. This presents another challenge as the AWDL virtual interface's MAC address is randomly generated each time the interface is activated. For testing on MacOS it suffices to run:

ifconfig awdl0

to determine the current MAC address. For iOS it's a little more involved; my chosen technique has been to sniff on the AWDL social channels and correlate signal strength with movements of the device to determine its current AWDL MAC.

There's one other important difference when you send an AWDL action frame to a unicast address: if the device is currently listening on that channel and receives the frame, it will send an ACK.

This turns out to be extremely helpful. We will end up building some quite complex primitives using AWDL action frames, abusing the protocol to build a weird machine. Being able to tell whether a target device really received a frame or not means we can treat AWDL frames more like a reliable transport medium. For the typical usage of AWDL this isn't necessary; but our usage of AWDL is not going to be typical.

This ACK-sniffing model will be the building block for our AWDL frame injection API.

Acktually receiving ACKs

Just because the ACKs are coming over the air now doesn't mean we actually see them. Although the WiFi adaptor we're using for injection must be technically capable of receiving ACKs (as they are a fundamental protocol building block), being able to see them on the monitor interface isn't guaranteed.

A screenshot of wireshark showing a spoofed AWDL frame followed by an Acknowledgement from the target device.

The libpcap interface is quite generic and doesn't have any way to indicate that a frame was ACKed or not. It might not even be the case that the kernel driver is aware whether an ACK was received. I didn't really want to delve into the injection interface kernel drivers or firmware as that was liable to be a major investment in itself so I tried some other ideas.

ACK frames in 802.11g and 802.11a are timing based. There's a short window after each transmitted frame when the receiver can ACK if they received the frame. It's for this reason that ACK frames don't contain a source MAC address. It's not necessary as the ACK is already perfectly correlated with a source device due to the timing.

If we also listen on our injection interface in monitor mode we might be able to receive the ACK frames ourself and correlate them. As mentioned, not all chipsets and drivers actually give you all the management frames.

For my early prototypes, I managed to find a pair in my box of WiFi adaptors where one would successfully inject on 2.4GHz channels at 1Mbps and the other would successfully sniff ACKs on that channel at 1Mbps.

1Mbps is exceptionally slow; a relatively large AWDL frame ends up being on the air for 10ms or more at that speed, so if your availability window is only a few ms you're not going to get many frames per second. Still, this was enough to get going.

The injection framework I built for the exploit uses two threads, one for frame injection and one for ACK sniffing. Frames are injected using the try_inject function, which extracts the spoofed source MAC address and signals to the second sniffing thread to start looking for an ACK frame being sent to that MAC.

Using a pthread condition variable, the injecting thread can then wait for a limited amount of time during which the sniffing thread may or may not see the ACK. If the sniffing thread does see the ACK it can record this fact then signal the condition variable. The injection thread will stop waiting and can check whether the ACK was received.

Take a look at try_inject_internal in the exploit for the mutex and condition variable setup code for this.
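For readers who don't want to dig through the exploit source, the general shape of that wait-with-timeout pattern looks something like the following. This is a generic sketch of the technique using the standard pthread API, not the code from try_inject_internal; the ack_waiter_t type and its functions are hypothetical names.

```c
#ifndef _POSIX_C_SOURCE
#define _POSIX_C_SOURCE 200809L
#endif
#include <pthread.h>
#include <time.h>

typedef struct {
  pthread_mutex_t lock;
  pthread_cond_t  cond;
  int             acked; /* set by the sniffer, consumed by the injector */
} ack_waiter_t;

static void ack_waiter_init(ack_waiter_t *w) {
  pthread_mutex_init(&w->lock, NULL);
  pthread_cond_init(&w->cond, NULL);
  w->acked = 0;
}

/* Sniffing thread: call when an ACK addressed to the spoofed MAC is seen. */
static void ack_waiter_signal(ack_waiter_t *w) {
  pthread_mutex_lock(&w->lock);
  w->acked = 1;
  pthread_cond_signal(&w->cond);
  pthread_mutex_unlock(&w->lock);
}

/* Injecting thread: call after sending a frame.
 * Returns 1 if an ACK was recorded within timeout_ms, 0 otherwise. */
static int ack_waiter_wait(ack_waiter_t *w, long timeout_ms) {
  struct timespec deadline;
  clock_gettime(CLOCK_REALTIME, &deadline);
  deadline.tv_sec  += timeout_ms / 1000;
  deadline.tv_nsec += (timeout_ms % 1000) * 1000000L;
  if (deadline.tv_nsec >= 1000000000L) {
    deadline.tv_sec += 1;
    deadline.tv_nsec -= 1000000000L;
  }

  pthread_mutex_lock(&w->lock);
  int rc = 0;
  /* Loop to cope with spurious wakeups; stop on timeout or any error. */
  while (!w->acked && rc == 0) {
    rc = pthread_cond_timedwait(&w->cond, &w->lock, &deadline);
  }
  int got_ack = w->acked;
  w->acked = 0; /* reset for the next frame */
  pthread_mutex_unlock(&w->lock);
  return got_ack;
}
```

Keeping the flag under the same mutex as the condition variable means an ACK which arrives before the injector starts waiting is not lost; the injector simply finds the flag already set.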

There's a wrapper around try_inject called inject which repeatedly calls try_inject until it succeeds. These two methods allow us to do all the timing sensitive and insensitive frame injection we need.

These two methods take a variable number of pkt_buf_t pointers; a simple custom variable-sized buffer wrapper object. The advantage of this approach is that it allows us to quickly prototype new AWDL frame structures without having to write boilerplate code. For example, this is all the code required to inject a basic AWDL frame and re-transmit it until the target receives it:

inject(RT(),
       WIFI(dst, src),
       AWDL(),
       SYNC_PARAMS(),
       SERV_PARAM(),
       PKT_END());

Investing a little bit of time building this API saved a lot of time in the long run and made it very easy to experiment with new ideas.
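The core of an API in this style is a variadic function which glues a terminated list of buffers together into one frame. The sketch below shows one way to do that with stdarg; demo_buf_t and demo_concat are hypothetical names for this illustration and are not the pkt_buf_t implementation from the exploit.

```c
#include <stdarg.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* A minimal buffer wrapper: a pointer and a length. */
typedef struct {
  const uint8_t *data;
  size_t len;
} demo_buf_t;

/* Concatenate a NULL-terminated list of (const demo_buf_t *) arguments
 * into out. Returns the number of bytes written, or 0 if the pieces do
 * not fit in cap bytes. */
static size_t demo_concat(uint8_t *out, size_t cap, ...) {
  va_list ap;
  size_t used = 0;
  va_start(ap, cap);
  for (;;) {
    const demo_buf_t *part = va_arg(ap, const demo_buf_t *);
    if (part == NULL) {
      break; /* terminator, playing the role of PKT_END() */
    }
    if (part->len > cap - used) {
      used = 0; /* would overflow the output buffer */
      break;
    }
    memcpy(out + used, part->data, part->len);
    used += part->len;
  }
  va_end(ap);
  return used;
}
```

The terminator has to be passed as a correctly typed null pointer, for example (const demo_buf_t *)NULL, since the compiler can't check the types of variadic arguments.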

With an injection framework finally up and running we can start to think about how to actually exploit this vulnerability!

The new challenges on A12/A13

The Apple A12 SOC found in the iPhone Xr/Xs contained the first commercially-available ARM CPU implementing the ARMv8.3 optional Pointer Authentication feature. This was released in September 2018. This post from Project Zero researcher Brandon Azad covers PAC and its implementation by Apple in great detail, as does this presentation from the 2019 LLVM developers meeting.

Its primary use is as a form of Control Flow Integrity. In theory all function pointers present in memory should contain a Pointer Authentication Code in their upper bits which will be verified after the pointer is loaded from memory but before it's used to modify control flow.

In almost all cases this PAC instrumentation will be added by the compiler. There's a really great document from the clang team which goes into great detail about the implementation of PAC from a compiler point of view and the security tradeoffs involved. It has a brilliant section on the threat model of PAC which frankly and honestly discusses the cases where PAC may help and the cases where it won't. Documentation like this should ship with every mitigation.

Having a publicly documented threat model helps everyone understand the intentions behind design decisions and the tradeoffs which were necessary. It helps build a common vocabulary and helps to move discussions about mitigations away from a focus on security through obscurity towards a qualitative appraisal of their strengths and weaknesses.

Concretely, the first hurdle PAC will throw up is that it will make it harder to forge vtable pointers.

All OSObject-derived objects have virtual methods. IO80211AWDLPeer, like almost all IOKit C++ classes, derives from OSObject so the first field is a vtable pointer. As we saw in the heap-grooming sketches earlier, by spraying IO80211AWDLPeer objects then triggering the heap overflow we can easily gain control of a vtable pointer. This technique was used in Mateusz Jurczyk's Samsung MMS remote exploit and Natalie Silvanovich's remote WebRTC exploit this year.

Kernel virtual calls have gone from looking like this on A11 and below:

LDR X8, [X20]      ; load vtable pointer
LDR X8, [X8,#0x38] ; load function pointer from vtable
MOV X0, X20
BLR X8             ; call virtual function

to this on A12 and above:

LDR X8, [X20] ; load vtable pointer

; authenticate vtable pointer using A-family data key and zero context
; if authentication passes, add 0x38 to vtable pointer, load value
; at that address into X9 and store X8+0x38 back to X8 without a PAC
LDRAA X9, [X8,#0x38]!

; overwrite the upper 16 bits of X8 with the constant 0xFFFC
; this is a hash of the mangled symbol; constant at each callsite
MOVK X8, #0xFFFC,LSL#48
MOV X0, X20

; authenticate virtual function pointer with A-family instruction key
; and context value where the upper 16 bits are a hash of the
; virtual function prototype and the lower 48 bits are the runtime
; address of the virtual function pointer in the vtable
BLRAA X9, X8

Diagrammatic view of a C++ virtual call in ARM64e showing the keys and discriminators used
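The context value built by the MOVK instruction above is just a bit-blend of two values, which can be modelled in C as follows. This only reproduces the arithmetic of forming the discriminator; it says nothing about how the PAC itself is computed, and the function name and example addresses are invented for the sketch.

```c
#include <stdint.h>

/* Model of "MOVK Xn, #hash, LSL #48": keep the low 48 bits of the vtable
 * slot address and replace the top 16 bits with the prototype hash. */
static uint64_t blend_discriminator(uint64_t slot_address, uint16_t prototype_hash) {
  return (slot_address & 0x0000FFFFFFFFFFFFull) |
         ((uint64_t)prototype_hash << 48);
}
```

Because the slot address is part of the context, the same signed function pointer copied to a different vtable slot would be authenticated against a different context value, even when the prototype hash matches.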

What does that mean in practice?

If we don't have a signing gadget, then we can't trivially point a vtable pointer to an arbitrary address. Even if we could, we'd need a data and instruction family signing gadget with control over the discriminator.

We can swap a vtable pointer with any other A-family 0-context data key signed pointer, however the virtual function pointer itself is signed with a context value consisting of the address of the vtable entry and a hash of the virtual function prototype. This means we can't swap virtual function pointers from one vtable into another one (or more likely into a fake vtable to which we're able to get an A-family data key signed pointer.)

We can swap one vtable pointer for another one to cause a type confusion, however every virtual function call made through that vtable pointer would have to be calling a function with a matching prototype hash. This isn't so improbable; a fundamental building block of object-oriented programming in C++ is to call functions with matching prototypes but different behaviour via a vtable. Nevertheless you'd have to do some thinking to come up with a generic defeat using this approach.

An important observation is that the vtable pointers themselves have no address diversity; they're signed with a zero-context. This means that if we can disclose a signed vtable pointer for an object of type A at address X, we can overwrite the vtable pointer for another object of type A at a different address Y.

This might seem completely trivial and uninteresting but remember: we only have a linear heap buffer overflow. If the vtable pointer had address diversity then for us to be able to safely corrupt fields after the vtable in an adjacent object we'd have to first disclose the exact vtable pointer following the object which we can overflow out of. Instead we can disclose any vtable pointer for this type and it will be valid.

The clang design doc explains why this is:

It is also known that some code in practice copies objects containing v-tables with memcpy, and while this is not permitted formally, it is something that may be invasive to eliminate.

Right at the end of this document they also say "attackers can be devious." On A12 and above we can no longer simply point the vtable pointer to a fake vtable and gain arbitrary PC control. Guess we'll have to get devious :)

Some initial ideas

Initially I continued using the iOS 12 beta 1 kernelcache when searching for exploitation primitives and performing the initial reversing to better understand the layout of the IO80211AWDLPeer object. This turned out to be a major mistake and a few weeks were spent following unproductive leads:

In the iOS 12 beta 1 kernelcache the fields following the sync_tree_macs buffer seemed uninteresting, at least from the perspective of being able to build a stronger primitive from the linear overflow. For this reason my initial ideas looked at corrupting the fields at the beginning of an IO80211AWDLPeer object which I could place subsequently in memory, option 2 which we saw earlier:

Spoofing many source MAC addresses makes allocating neighbouring IO80211AWDLPeer objects fairly easy. The synctree buffer overflow then allows corrupting the lower fields of an IO80211AWDLPeer in addition to the upper fields

Almost certainly we're going to need some kind of memory disclosure primitive to land this exploit. My first ideas for building a memory disclosure primitive involved corrupting the linked-list of peers. The data structure holding the peers is in fact much more complex than a linked list, it's more like a priority queue with some interesting behaviours when the queue is modified and a distinct lack of safe unlinking and the like. I'd expect iOS to start slowly migrating to using data-PAC for linked-list integrity, but for now this isn't the case. In fact these linked lists don't even have the most basic safe-unlinking integrity checks yet.

The start of an IO80211AWDLPeer object looks like this:

All IOKit objects inheriting from OSObject have a vtable and a reference count as their first two fields. In an IO80211AWDLPeer these are followed by a hash_bucket identifier, a peer_list flink and blink, the peer's MAC address and the peer's peer_manager pointer.

My first ideas revolved around trying to partially corrupt a peer linked-list pointer. In hindsight, there's an obvious reason why this doesn't work (which I'll discuss in a bit), but let's remain enthusiastic and continue on for now...

Looking through the places where the linked list of peers seemed to be used it looked like perhaps the IO80211AWDLPeerManager::updatePeerListBloomFilter method might be interesting from the perspective of trying to get data leaked back to us. Let's take a look at it:

IO80211AWDLPeerManager::updatePeerListBloomFilter(){
  int n_peers = this->peers_list.n_elems;

  if (!this->peer_bloom_filters_enabled) {
    return 0;
  }

  bzero(this->bloom_filter_buf, 0xA00uLL);
  this->n_macs_in_bloom_filter = 0;

  IO80211AWDLPeer* peer = this->peers_list.head;
  int n_peers_in_filter = 0;
  for (;
       n_peers_in_filter < n_peers && n_peers_in_filter < 0x100;
       n_peers_in_filter++) {
    this->bloom_filter_macs[n_peers_in_filter] = peer->mac;
    peer = peer->flink;
  }

  bloom_filter_create(10*(n_peers_in_filter+7) & 0xff8,
                      0,
                      n_peers_in_filter,
                      this->bloom_filter_macs,
                      this->bloom_filter_buf);

  if (n_peers_in_filter){
    this->updateBroadcastMI(9, 1, 0);
  }

  return 0;
}

From the IO80211AWDLPeerManager it reads the peer list head pointer as well as a count of the number of entries in the peer list. For each entry in the list it reads the MAC address field into an array, then it builds a bloom filter from that buffer.

The interesting part here is that the list traversal is terminated using a count of elements which have been traversed rather than by looking for a termination pointer value at the end of the list (eg a NULL or a pointer back to the head element.) This means that potentially if we could corrupt the linked-list pointer of the second-to-last peer to be processed we could point it to a fake peer and get data at a controlled address added into the bloom filter. updateBroadcastMI looks like it will add that bloom filter data to the Master Indication frame in the bloom filter TLV, meaning we could get a bloom filter containing data read from a controlled address sent back to us. Depending on the exact format of the bloom filter it would probably be possible to then recover at least some bits of remote memory.
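A toy model helps show why count-terminated traversal is attractive here. In the sketch below the list node type, its field layout and the function name are all simplifications invented for illustration; the point is only that the loop trusts the element count and follows whatever the flink pointers say.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical, heavily simplified stand-in for a peer in the list. */
typedef struct demo_list_peer {
  struct demo_list_peer *flink;
  uint8_t mac[6];
} demo_list_peer_t;

/* Copy MACs from the list into out, stopping after n_elems hops
 * (or max_out entries) rather than at a NULL or head pointer. */
static size_t demo_collect_macs(const demo_list_peer_t *head, size_t n_elems,
                                uint8_t out[][6], size_t max_out) {
  size_t n = 0;
  const demo_list_peer_t *peer = head;
  for (; n < n_elems && n < max_out; n++) {
    memcpy(out[n], peer->mac, 6);
    peer = peer->flink; /* followed blindly; never checked against the count */
  }
  return n;
}
```

If the flink of the second-to-last peer is redirected to attacker-chosen memory, the final iteration copies six bytes from there into the array, while the element count, and therefore the loop bound, stays exactly the same.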

It's

important to emphasize at this point that due to the lack of a remote

KASLR leak and also the lack of a remote PAC signing gadget or vtable

disclosure, in order to corrupt the linked-list pointer of an adjacent

peer object we have no option but to corrupt its vtable pointer with an

invalid value. This means that if any virtual methods were called on

this object, it would almost certainly cause a kernel panic.

The first part of trying to get this to work was to work out how to build a suitable heap groom such that we could overflow from a peer into the second-to-last peer in the list which would be processed:

Both

the linked-list order and the virtual memory order need to be groomed

to allow a targeted partial overflow of the final linked-list pointer to

be traversed. In this layout we'd need to overflow from 2 into 6 to

corrupt the final pointer from 6 to 7.

There is a mitigation from a few years ago in play here which we'll have to work around; namely the randomization of the initial zone freelists, which adds a slight element of randomness to the order of the allocations you will get for consecutive calls to kalloc for the same size. The randomness is quite minimal, however, so the trick here is to be able to pad your allocations with "safe" objects such that even though you can't guarantee that you always overflow into the target object, you can mostly guarantee that you'll overflow into that object or a safe object.

We need two primitives: Firstly, we need to understand the semantics of the list. Secondly, we need some safe objects.

The peer list

With

a bit of reversing we can determine that the code which adds peers to

the list doesn't simply add them to the start. Peers which are first

seen on a 2.4GHz channel (6) do get added this way, but peers first seen

on a 5GHz channel (44) are inserted based on their RSSI (received signal strength indication - a unitless value approximating

signal strength.) Stronger signals mean the peer is probably physically

closer to the device and will also be closer to the start of the list.

This gives some nice primitives for manipulating the list and ensuring

we know where peers will end up.
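The insertion rules described above can be sketched as follows. This is a simplified model, not the reversed kernel code: the struct peer fields, the is_5ghz threshold, the convention that a larger rssi value means a stronger signal, and the tie-breaking for equal RSSI are all assumptions made for illustration.

```c
#include <stddef.h>

/* Hypothetical, simplified peer record for modeling list order only. */
struct peer {
    struct peer *flink;
    int rssi;     /* assumption: larger value means stronger signal */
    int channel;  /* channel the peer was first seen on */
};

/* Assumption: treat channel numbers from 36 upwards as 5GHz. */
static int is_5ghz(int channel) { return channel >= 36; }

/* Peers first seen on 2.4GHz go straight to the head of the list.
 * Peers first seen on 5GHz are placed after every entry with an
 * equal or stronger signal, so stronger peers sit nearer the head. */
static void peer_list_insert(struct peer **head, struct peer *p)
{
    struct peer **link = head;

    if (is_5ghz(p->channel)) {
        while (*link != NULL && (*link)->rssi >= p->rssi)
            link = &(*link)->flink;
    }
    p->flink = *link;
    *link = p;
}
```

Under this model, an attacker who controls the channel and transmit power of each spoofed peer can predict where it lands: three 5GHz peers at -60, -40 and -50 end up ordered -40, -50, -60, and a later 2.4GHz peer goes in front of all of them.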

Safe objects

The

second requirement is to be able to allocate arbitrary, safe objects.

Our ideal heap grooming/shaping objects would have the following

primitives:

1) arbitrary size

2) unlimited allocation quantity

3) allocation has no side effects

4) controlled contents

5) contents can be safely corrupted

6) can be free'd at an arbitrary, controlled point, with no side effects

Of

course, we're completely limited to objects we can force to be

allocated remotely via AWDL so all the tricks from local kernel

exploitation don't work. For example, I and others have used various

forms of mach messages, unix pipe buffers, OSDictionaries, IOSurfaces and more to build these primitives. None of these are going to work at

all. AWDL is sufficiently complicated however that after some reversing I

found a pretty good candidate object.

Service response descriptor (SRD)

This is my reverse-engineered definition of the service response descriptor TLV (type 2):

{ u8  type
  u16 len
  u16 key_len
  u8  key_val[key_len]
  u16 value_total_size
  u16 fragment_offset
  u8  fragment[len-key_len-6] }

It has two variable-sized fields: key_val and fragment. The key_len field defines the length of the key_val buffer, and the length of fragment is the remaining space left at the end of the TLV. The parser for this TLV makes a kalloc allocation of value_total_size, an arbitrary u16. It then memcpy's from fragment into that kalloc buffer at offset fragment_offset:

The service_response technique gives us a powerful heap grooming primitive

I

believe this is supposed to be support for receiving out-of-order

fragments of service request responses. It gives us a very powerful

primitive for heap grooming. We can choose an arbitrary allocation size

up to 64k and write an arbitrary amount of controlled data to an

arbitrary offset in that allocation and we only need to provide the

offset and content bytes.
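As a rough illustration of how little a sender has to supply, here is a sketch of serializing one SRD TLV following the field layout given above. The function name build_srd_tlv, the little-endian encoding of the u16 fields, and the sender-side bounds check are assumptions for this sketch and are not taken from the reversed parser.

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

#define SRD_TLV_TYPE 2

/* Assumption: u16 fields are encoded little-endian on the wire. */
static void put_u16le(uint8_t *p, uint16_t v)
{
    p[0] = (uint8_t)(v & 0xff);
    p[1] = (uint8_t)(v >> 8);
}

/* Serialize one SRD TLV into out. Returns the number of bytes written,
 * or 0 if the TLV would not fit or the fragment would not lie inside
 * the requested allocation (a sanity check chosen for this sketch). */
static size_t build_srd_tlv(uint8_t *out, size_t out_cap,
                            const uint8_t *key, uint16_t key_len,
                            uint16_t value_total_size,
                            uint16_t fragment_offset,
                            const uint8_t *fragment, uint16_t fragment_len)
{
    /* len covers key_len (2) + key + value_total_size (2) +
     * fragment_offset (2) + fragment, so fragment is len-key_len-6. */
    size_t len = (size_t)key_len + 6 + fragment_len;
    size_t total = 3 + len;  /* type (1) + len (2) + payload */
    uint8_t *p = out;

    if (len > 0xffff || total > out_cap)
        return 0;
    if ((size_t)fragment_offset + fragment_len > value_total_size)
        return 0;

    *p++ = SRD_TLV_TYPE;
    put_u16le(p, (uint16_t)len);      p += 2;
    put_u16le(p, key_len);            p += 2;
    if (key_len) { memcpy(p, key, key_len); p += key_len; }
    put_u16le(p, value_total_size);   p += 2;  /* kalloc size on the target */
    put_u16le(p, fragment_offset);    p += 2;  /* where the fragment lands */
    if (fragment_len) memcpy(p, fragment, fragment_len);

    return total;
}
```

For example, a 1-byte key, value_total_size of 0x1800 and a 4-byte fragment at offset 0x10 serialize to a 14-byte TLV, which would request a 6144-byte allocation while carrying only four bytes of content.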

This

also gives us a kind of amplification primitive. We can bundle quite a

lot of these TLVs in one frame allowing us to make megabytes of

controlled heap allocations with minimal side effects in just one AWDL

frame.

This

SRD technique in fact almost completely meets criteria 1-5 outlined

above. It's almost perfect apart from one crucial point; how can we free

these allocations?

Through

static reversing I couldn't find how these allocations would be free'd,

so I wrote a dtrace script to help me find when those exact kalloc

allocations were free'd. Running this dtrace script then running a test

AWDL client sending SRDs I saw the allocation but never the free. Even

disabling the AWDL interface, which should clean up most of the

outstanding AWDL state, doesn't cause the allocation to be freed.

This

is possibly a bug in my dtrace script, but there's another theory: I

wrote another test client which allocated a huge number of SRDs. This

allocated a substantial amount of memory, enough to be visible using zprint. And indeed, running that test client repeatedly then running zprint you can observe the inuse count of the target zone getting larger and larger. Disabling AWDL

doesn't help, neither does waiting overnight. This looks like a pretty

trivial memory leak.

Later

on we'll examine the cause of this memory leak but for now we have a

heap allocation primitive which meets criteria 1-5, that's probably good

enough!

A first attempt at a useful corruption

I

managed to build a heap groom which gets the linked-list and heap

objects set up such that I can overflow into the second-to-last peer

object to be processed:

By

surrounding peer objects with a sufficient number of safe objects we

can ensure that the linear corruption either hits the right peer object

or a safe object

The

trick is to ensure that the ratio of safe objects to peers is

sufficiently high that you can be (reasonably) sure that the two target

peers will only be next to each other or next to safe objects (they

won't be next to other peers in the list.) Even though you may not be

able to force the two peers to be in the correct order as shown in the

diagram, you can at least make the corruption safe if they aren't, then

try again.

When writing the code to build the SyncTree TLV I realized I'd made a huge oversight...

My initial idea had been to only partially overwrite a valid linked-list pointer element:

If we could partially overflow the peer_list_flink pointer we could potentially move it to point somewhere nearby. In this illustration by moving it down by 8 bytes we could potentially get some bytes of a peer_list_blink added to the peer MACs bloom filter. A partial overwrite doesn't directly give a relative add or subtract primitive, but with some heap grooming overwriting the lower 2 bytes can yield something similar.

But when you actually look more closely at the memory layout taking into account the limitations of the corruption primitive:

Computing the relative offsets between two IO80211AWDLPeers next to each other in memory it turns out that a useful partial overwrite of peer_list_flink isn't possible as it lies on a 6-byte boundary from the lower peer's sync_tree_macs array

This

is not a useful type of partial overwrite and it took a lot of effort

to make this heap groom work only to realize in hindsight this obvious

oversight.
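The alignment problem can be checked with a few lines of arithmetic. This sketch assumes the overflow only ever writes whole 6-byte MAC addresses starting at the beginning of the lower peer's array, and that pointers are stored little-endian so the first bytes hit are the low-order ones. The function name and the example distances are hypothetical; only the "multiple of 6" relationship comes from the text above.

```c
#include <stddef.h>

#define MAC_LEN 6

/* dist is the byte distance from the start of the overflowing MAC
 * array to the first byte of the target pointer field. The overflow
 * writes whole MAC_LEN-byte entries, so the shortest overflow that
 * reaches the pointer ends at the next multiple of MAC_LEN beyond
 * dist. Returns how many low-order pointer bytes that shortest
 * overflow overwrites. */
static size_t min_ptr_bytes_clobbered(size_t dist)
{
    size_t n_macs = dist / MAC_LEN + 1;  /* fewest MACs that reach past dist */
    size_t end = n_macs * MAC_LEN;       /* first byte not overwritten */
    return end - dist;
}
```

When dist is a multiple of 6 the result is always 6: the shortest overflow that touches the pointer already replaces six of its eight bytes. A 2-byte partial overwrite would only be reachable if dist were 4 modulo 6.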

Attempting

to salvage something from all this work I tried instead to just

completely overwrite the linked-list pointer. We'd still need some other

vulnerability or technique to determine what we should overwrite with

but it would at least be some progress to see a read or write from a

controlled address.

Alas,

whilst I'm able to do the overflow, it appears that the linked-list of

peers is being continually traversed in the background even when there's

no AWDL traffic and virtual methods are being called on each peer. This

will make things significantly harder without first knowing a vtable

pointer.

Another option would be to trigger the SyncTree overflow twice during the parsing of a single frame. Recall the code in actionFrameReport:

IO80211AWDLPeer::actionFrameReport
...
    case 0x14:
      if (tlv_cnt[0x14] >= 2)
        goto ERR;
      tlv_cnt[0x14]++;
      this->parseAwdlSyncTreeTLV(bytes);

I

explored places where a TLV would trigger a peer list traversal. The

idea would then be to sandwich a controlled lookup between two SyncTree

TLVs, the first to corrupt the list and the second to somehow make that

safe. There were some code paths like this, where we could cause a

controlled peer to be looked up in the peer list. There were even some

places where we could potentially get a different memory corruption

primitive from this but they looked even trickier to exploit. And even

then you'd not be able to reset the peer list pointer with the second

overflow anyway.

Reset

Thus

far none of my ideas for a read panned out; messing with the linked

list without a correctly PAC'd vtable pointer just doesn't seem

feasible. At this point I'd probably consider looking for a second

vulnerability. For example, in Natalie's recent WebRTC exploit she was

able to find a second vulnerability to defeat ASLR.

There are still some other ideas left open but they seem tricky to get right:

The other major type of object in the kalloc.6144 zone is the ipc_kmsg for some IOKit methods. These are in-flight mach messages and it might be possible to corrupt them such that we could inject arbitrary mach messages into userspace. This idea seems mostly to create new challenges rather than solve any open ones though.

If

we don't target the same zone then we could try a cross-zone attack,

but even then we're quite limited by the primitives offered by AWDL.

There just aren't that many interesting objects we can allocate and

manipulate.

By